What is Ingress?

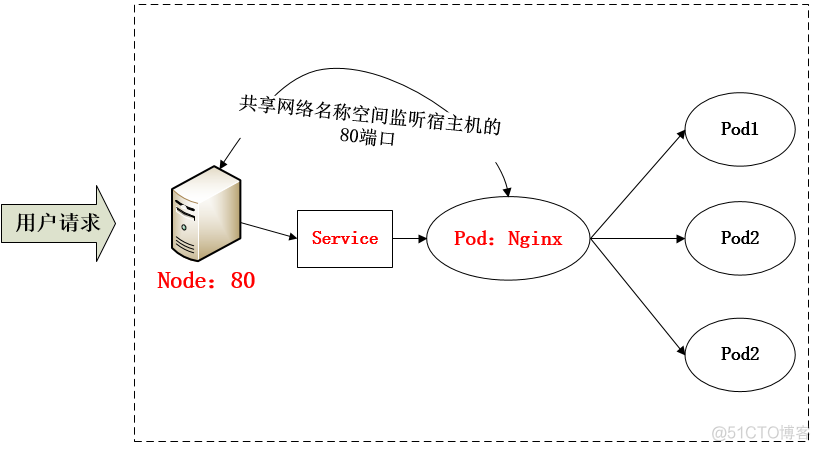

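The problem with exposing services through NodePort is that once the number of services grows, the set of ports opened on every node becomes extremely large and hard to maintain. Could we instead use a single Nginx to forward traffic internally? Pods can communicate with one another, and a Pod can share the host's network namespace; when it does, any port the Pod listens on is a port on the Node. So how can this be implemented? The simple approach is to run Nginx as a DaemonSet that listens on port 80 of every Node, and then write the forwarding rules. Because Nginx is bound to the host's port 80 on the outside (much like NodePort) and itself sits inside the cluster, it can forward directly to the corresponding Service IP, as shown in the figure below: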

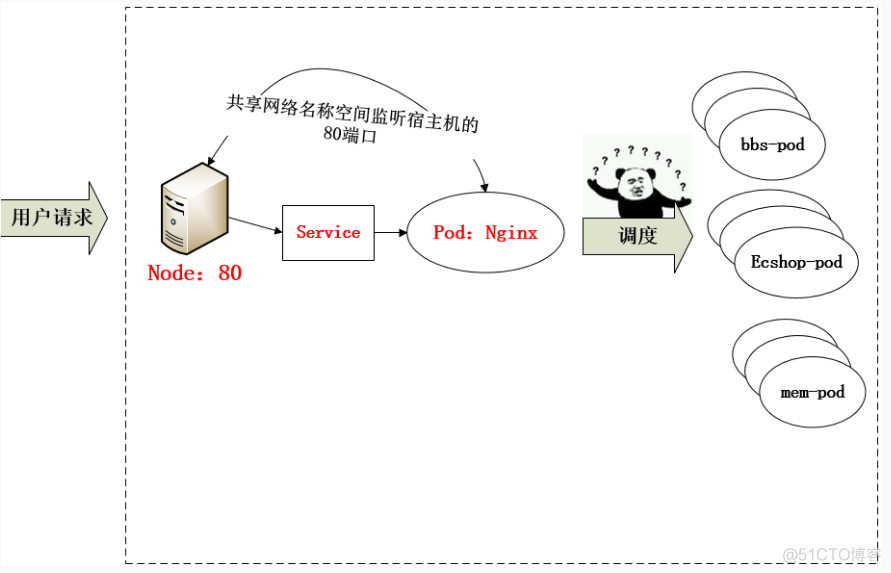
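The DaemonSet approach described above could be sketched roughly as follows. This is a minimal illustration only: the name `nginx-edge`, its labels, and the image tag are assumptions, not taken from the original setup, and the Nginx forwarding rules themselves are left out.

```yaml
# Hypothetical sketch: name, labels, and image tag are illustrative assumptions.
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: nginx-edge                          # hypothetical name
  namespace: default
spec:
  selector:
    matchLabels:
      app: nginx-edge
  template:
    metadata:
      labels:
        app: nginx-edge
    spec:
      hostNetwork: true                     # share the Node's network namespace
      dnsPolicy: ClusterFirstWithHostNet    # keep cluster DNS usable with hostNetwork
      containers:
      - name: nginx
        image: nginx:1.25                   # assumed tag
        ports:
        - containerPort: 80                 # with hostNetwork this is port 80 on the Node
```

Because a DaemonSet schedules one Pod per Node, two Nginx Pods never compete for port 80 on the same host.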

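The Nginx Pod approach above seems to solve the problem, but it has a major flaw: how do we update the Nginx configuration each time a new service is added? With Nginx we can distinguish services by virtual host name, define a separate load-balancing pool for each service with upstream, and reverse-proxy to it with location; in day-to-day use this only requires editing nginx.conf. How do we achieve the same kind of dispatching in K8S?

Suppose the backend initially has only the ecshop service, and the bbs and member services are added later. How do we add these 2 services to the Nginx Pod? We cannot edit the configuration by hand or do a Rolling Update of the front-end Nginx Pod every time. This is where Ingress comes in. Leaving aside the Nginx above, Ingress consists of two components: the Ingress Controller and the Ingress.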

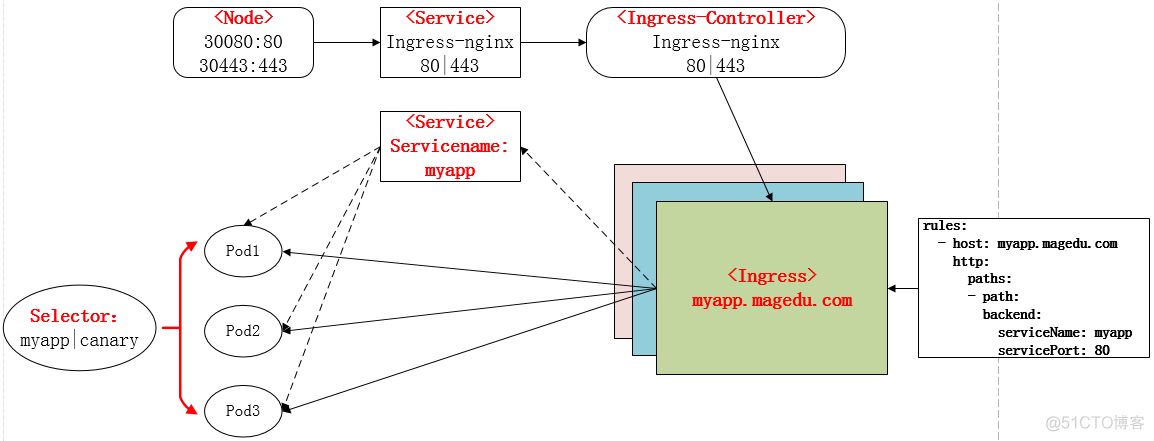

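Put simply, Ingress replaces what you used to do by editing the Nginx configuration, namely mapping each domain name to a Service. That action is abstracted into an Ingress object that you create from yaml. Instead of editing Nginx each time, you edit the yaml and create or update the object. This raises the question: "what happens to Nginx?"

The Ingress Controller is what answers "what happens to Nginx". It talks to the Kubernetes API to detect changes to the Ingress rules in the cluster, reads them, generates an Nginx configuration snippet from its own template, writes it into the Nginx Pod, and finally reloads Nginx. The workflow is shown in the figure below:

In fact, Ingress is one of the standard resource types of the Kubernetes API. It is a set of rules that forward requests to a specified Service based on DNS name (host) or URL path, and it is used to publish services by routing request traffic from outside the cluster to the inside. Note that an Ingress resource cannot carry traffic by itself; it is only a collection of rules. Those rules need another component that listens on a socket and routes traffic according to the rules that match. The component that listens and forwards traffic on behalf of Ingress resources is the Ingress Controller.

PS: Unlike the Deployment controller, an Ingress controller does not run as part of kube-controller-manager. It is an add-on to the Kubernetes cluster, similar to CoreDNS, and has to be deployed separately.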

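How to create an Ingress resource

An Ingress resource is a forwarding rule based on HTTP virtual hosts or URLs; to stress the point, it is only a forwarding rule. In the manifest it is defined through fields such as rules, backend, and tls nested under spec. The example below defines an Ingress resource with a single forwarding rule: requests sent to myapp.quwenqing.com are proxied to a Service named myapp.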

apiVersion: extensions/v1beta1 # no longer served as of Kubernetes v1.22; newer clusters require networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-myapp
  namespace: default
  annotations:
    kubernetes.io/ingress.class: "nginx"
spec:
  ingressClassName: nginx
  rules:
  - host: myapp.quwenqing.com
    http:
      paths:
      - path:
        backend:
          serviceName: myapp
          servicePort: 80

The spec field is the core of an Ingress resource and mainly contains the following 3 fields:

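- rules: the list of forwarding rules for this Ingress resource. If no rules are defined, or no rule matches, all traffic is forwarded to the default backend defined by backend.

- backend: the default backend, which serves requests that match no rule. When defining an Ingress resource, at least one of backend or rules must be specified. This field lets the load balancer designate a global default backend.

- tls: the TLS configuration. Currently only the default port 443 is supported. If members of this list specify different hosts, they are served on the same port and told apart through the SNI TLS extension.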

A backend object is defined by 2 required fields, serviceName and servicePort, which specify the name and the port of the target Service that traffic is forwarded to.
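The field names described here (backend, serviceName, servicePort) belong to the extensions/v1beta1 schema, which Kubernetes no longer serves from v1.22 onward. As a hedged sketch, the same myapp rule might be written for networking.k8s.io/v1 as follows; the defaultBackend entry is added purely to illustrate how the spec-level default backend is expressed in that version and is not part of the original example.

```yaml
# Sketch of the myapp rule in the networking.k8s.io/v1 schema.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-myapp
  namespace: default
spec:
  ingressClassName: nginx
  defaultBackend:              # v1 name for the spec-level "backend" field (illustrative)
    service:
      name: myapp
      port:
        number: 80
  rules:
  - host: myapp.quwenqing.com
    http:
      paths:
      - path: /
        pathType: Prefix       # pathType is required in v1
        backend:
          service:
            name: myapp        # replaces serviceName
            port:
              number: 80       # replaces servicePort
```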

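A rules object consists of a list of host rules for the Ingress resource. These host rules map a URL on a given host to a backend Service object, in the following format:

spec:
  rules:
  - host:
    http:
      paths:
      - path:
        backend:
          serviceName:
          servicePort:

Note that the value of .spec.rules.host currently cannot be an IP address, and the IP:Port format is not supported either. Leaving the field empty matches all host names.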
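To make the host and path mapping concrete, here is a minimal sketch using the ecshop, bbs, and member services mentioned earlier. It assumes the networking.k8s.io/v1 syntax, that each Service listens on port 80, and a hypothetical host name and object name; none of these details come from the original deployment.

```yaml
# Hypothetical sketch: host name, object name, and Service ports are assumptions.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-shop               # hypothetical name
  namespace: default
spec:
  ingressClassName: nginx
  rules:
  - host: shop.quwenqing.com       # hypothetical host; must be a DNS name, not an IP
    http:
      paths:
      - path: /bbs
        pathType: Prefix
        backend:
          service:
            name: bbs
            port:
              number: 80
      - path: /member
        pathType: Prefix
        backend:
          service:
            name: member
            port:
              number: 80
  - http:                          # host omitted: this rule matches any host name
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: ecshop
            port:
              number: 80
```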

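A tls object consists of 2 nested fields and is used only when defining forwarding rules for TLS hosts.

- hosts: the list of host names covered by the TLS certificate in use; the host names given here must therefore match the names in the certificate stored in the tls Secret.

- secretName: the name of the secret object referenced for the SSL session; this field is optional when multi-host routing is implemented based on SNI.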
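The two tls fields fit into a manifest roughly as sketched below. The example assumes the networking.k8s.io/v1 syntax and a hypothetical Secret named `myapp-tls` of type kubernetes.io/tls that already exists in the same namespace.

```yaml
# Hypothetical sketch: the object name and Secret name are assumptions.
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-myapp-tls          # hypothetical name
  namespace: default
spec:
  ingressClassName: nginx
  tls:
  - hosts:
    - myapp.quwenqing.com          # must match a name in the certificate
    secretName: myapp-tls          # hypothetical Secret holding tls.crt and tls.key
  rules:
  - host: myapp.quwenqing.com
    http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: myapp
            port:
              number: 80
```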

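Deploying Ingress Nginx

Steps for using Ingress:

1. Install and deploy the ingress controller

2. Deploy the backend service

3. Deploy the ingress-nginx service

4. Deploy the ingress

From the description above we know that an Ingress can be created from yaml, which tells us that Ingress is also a standard K8S resource. Its definition can be inspected with kubectl explain, as is done in step 2 below.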

1. Deploy the Ingress controller

(1) Download the ingress YAML

# curl -k -o ingress-nginx.yaml https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.3.0/deploy/static/provider/cloud/deploy.yaml

(2) Choose a deployment method

https://github.com/kubernetes/ingress-nginx/blob/main/docs/deploy/baremetal.md

- A pure software solution: MetalLB (not tested here)

- Over a NodePort Service

- Via the host network

- Using a self-provisioned edge

- External IPs (this approach caused cluster anomalies; the cause was not found)

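Using a self-provisioned edge places a proxy service such as Nginx or HAProxy in front of the Over a NodePort Service setup, and the proxy forwards to the internal services.

Via the host network exposes the Pod's ports 80/443 directly on the node's host network. Because traffic does not go through NodePort's NAT, performance is somewhat better.

Configuration changes for Over a NodePort Service:

Because the ClusterIP and the NodePort are assigned randomly in the cluster, one of them needs to be pinned.

Over a NodePort Service suits a front end such as LVS that forwards to the NodePort, so pinning the nodePort is the better choice.

Modify the Service section of ingress-nginx-controller and add nodePort: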

apiVersion: v1
kind: Service
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.3.0
  name: ingress-nginx-controller
  namespace: ingress-nginx
spec:
  externalTrafficPolicy: Local
  ports:
  - appProtocol: http
    name: http
    port: 80
    protocol: TCP
    targetPort: http
    nodePort: 31080 # added
  - appProtocol: https
    name: https
    port: 443
    protocol: TCP
    targetPort: https
    nodePort: 31443 # added
  selector:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
  type: LoadBalancer

For reliability, also add replicas: 2 to the Deployment section.
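The replica change mentioned above goes directly under spec of the controller Deployment in the downloaded manifest. The fragment below only shows where the field sits; it is not a complete manifest, and everything else is assumed to stay as shipped.

```yaml
# Fragment only: shows the placement of replicas in the controller Deployment.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: ingress-nginx-controller
  namespace: ingress-nginx
spec:
  replicas: 2    # added; Kubernetes defaults to 1 when the field is omitted
  # selector, template, and all remaining fields stay as in the downloaded manifest
```

One thing to keep in mind with externalTrafficPolicy: Local is that only nodes running a controller Pod answer on the nodePort, so a front-end balancer should target (or health-check) those nodes.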

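Configuration changes for Using a self-provisioned edge:

Because the ClusterIP and the NodePort are assigned randomly in the cluster, one of them needs to be pinned.

Using a self-provisioned edge requires a proxy service deployed in front, so pinning the clusterIP is the better choice.

Modify the Service section of ingress-nginx-controller and add clusterIP: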

apiVersion: v1
kind: Service
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.3.0
  name: ingress-nginx-controller
  namespace: ingress-nginx
spec:
  externalTrafficPolicy: Local
  clusterIP: 172.16.16.16 # added
  ports:
  - appProtocol: http
    name: http
    port: 80
    protocol: TCP
    targetPort: http
  - appProtocol: https
    name: https
    port: 443
    protocol: TCP
    targetPort: https
  selector:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
  type: LoadBalancer

For reliability, also add replicas: 2 to the Deployment section.

Configuration changes for Via the host network:

DaemonSet introduction: https://kubernetes.io/docs/concepts/workloads/controllers/daemonset/

apiVersion: apps/v1
kind: DaemonSet # changed from Deployment to DaemonSet so that two Pods never land on the same node and conflict over ports
metadata:
  labels:
    app.kubernetes.io/component: controller
    app.kubernetes.io/instance: ingress-nginx
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
    app.kubernetes.io/version: 1.3.0
  name: ingress-nginx-controller
  namespace: ingress-nginx
spec:
  minReadySeconds: 0
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      app.kubernetes.io/component: controller
      app.kubernetes.io/instance: ingress-nginx
      app.kubernetes.io/name: ingress-nginx
  template:
    metadata:
      labels:
        app.kubernetes.io/component: controller
        app.kubernetes.io/instance: ingress-nginx
        app.kubernetes.io/name: ingress-nginx
    spec:
      hostNetwork: true # added
      containers:
      - args:
        - /nginx-ingress-controller
        - --publish-service=$(POD_NAMESPACE)/ingress-nginx-controller
        - --election-id=ingress-controller-leader
        - --controller-class=k8s.io/ingress-nginx
        - --ingress-class=nginx
        - --configmap=$(POD_NAMESPACE)/ingress-nginx-controller
        - --validating-webhook=:8443
        - --validating-webhook-certificate=/usr/local/certificates/cert
        - --validating-webhook-key=/usr/local/certificates/key
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: LD_PRELOAD
          value: /usr/local/lib/libmimalloc.so
        image: harbor.1360.com/ingress-nginx/controller:v1.3.0
        imagePullPolicy: IfNotPresent
        lifecycle:
          preStop:
            exec:
              command:
              - /wait-shutdown
        livenessProbe:
          failureThreshold: 5
          httpGet:
            path: /healthz
            port: 10254
            scheme: HTTP
          initialDelaySeconds: 10
          periodSeconds: 10
          successThreshold: 1
          timeoutSeconds: 1
        name: controller
        ports:
        - containerPort: 80
          name: http
          protocol: TCP
        - containerPort: 443
          name: https
          protocol: TCP
        - containerPort: 8443
          name: webhook
          protocol: TCP
        readinessProbe:
          failureThreshold: 3
          httpGet:
            path: /healthz
            port: 10254
            scheme: HTTP
          initialDelaySeconds: 10
          periodSeconds: 10
          successThreshold: 1
          timeoutSeconds: 1
        resources:
          requests:
            cpu: 100m
            memory: 90Mi
        securityContext:
          allowPrivilegeEscalation: true
          capabilities:
            add:
            - NET_BIND_SERVICE
            drop:
            - ALL
          runAsUser: 101
        volumeMounts:
        - mountPath: /usr/local/certificates/
          name: webhook-cert
          readOnly: true
      dnsPolicy: ClusterFirst # with hostNetwork: true, ClusterFirstWithHostNet is needed if the Pod must resolve cluster-internal DNS names
      nodeSelector:
        kubernetes.io/os: linux
      serviceAccountName: ingress-nginx
      terminationGracePeriodSeconds: 300
      volumes:
      - name: webhook-cert
        secret:
          secretName: ingress-nginx-admission

(3) Create the ingress controller Pods

# kubectl apply -f ingress-nginx.yaml
...
[root@k8s-master ingress-nginx]# kubectl get pod -n ingress-nginx -w
NAME READY STATUS RESTARTS AGE
ingress-nginx-controller-2vq76 1/1 Running 0 30s
ingress-nginx-controller-n5kq8 1/1 Running 0 30s

Test access

# kubectl get svc -n ingress-nginx
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
ingress-nginx-controller LoadBalancer 172.16.16.16 80:31080/TCP,443:31443/TCP 113m
ingress-nginx-controller-admission ClusterIP 172.16.229.95 443/TCP 5h53m

Now access 172.16.16.16.

The response should be a 404 at this point: the controller is working normally, but no backend service has been associated with it yet.

# curl -v 172.16.16.16
* Rebuilt URL to: 172.16.16.16/
* Trying 172.16.16.16...
* TCP_NODELAY set
* Connected to 172.16.16.16 (172.16.16.16) port 80 (#0)
> GET / HTTP/1.1
> Host: 172.16.16.16
> User-Agent: curl/7.51.0
> Accept: */*
>
< HTTP/1.1 404 Not Found
< Date: Fri, 12 Aug 2022 03:38:36 GMT
< Content-Type: text/html
< Content-Length: 146
< Connection: keep-alive
<
<html>
<head><title>404 Not Found</title></head>
<body>
<center><h1>404 Not Found</h1></center>
<hr><center>nginx</center>
</body>
</html>
* Curl_http_done: called premature == 0
* Connection #0 to host 172.16.16.16 left intact

2. Deploy the backend service

(1) View the manifest options for ingress

[root@k8s-master ingress-nginx]# kubectl explain ingress.spec
KIND: Ingress
VERSION: extensions/v1beta1
...

(2) Deploy the backend service

# vim hostnames.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: hostnames
spec:
  selector:
    matchLabels:
      app: hostnames
  replicas: 3
  template:
    metadata:
      labels:
        app: hostnames
    spec:
      containers:
      - name: hostnames
        image: k8s.gcr.io/serve_hostname
        resources:
          limits:
            memory: "128Mi"
            cpu: "500m"
        ports:
        - containerPort: 9376
---
apiVersion: v1
kind: Service
metadata:
  name: hostnames-server
spec:
  type: NodePort
  selector:
    app: hostnames
  ports:
  - port: 80
    targetPort: 9376
    nodePort: 31122

# kubectl apply -f hostnames.yaml
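The NodePort type in the manifest above makes it possible to test the backend directly on port 31122, but an Ingress backend only has to be reachable inside the cluster. As a sketch, assuming direct access through a node port is not needed, the Service could be a plain ClusterIP one:

```yaml
# Alternative sketch: ClusterIP Service for the same hostnames Pods.
apiVersion: v1
kind: Service
metadata:
  name: hostnames-server
spec:
  type: ClusterIP        # the default type; no port is opened on the nodes
  selector:
    app: hostnames
  ports:
  - port: 80             # port referenced by the Ingress backend
    targetPort: 9376     # container port of serve_hostname
```

This keeps the number of node ports down, which is the motivation for using Ingress in the first place.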

(3) View the newly created backend Pods

# kubectl get pods
NAME READY STATUS RESTARTS AGE
hostnames-5d688d6cc5-6w9zz 1/1 Running 0 7m
hostnames-5d688d6cc5-98s6l 1/1 Running 0 7m
hostnames-5d688d6cc5-q6625 1/1 Running 0 7m

3. Deploy the ingress

(1) Write the ingress manifest

# vim ingress-hostname.yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-hostname
  namespace: default
spec:
  ingressClassName: nginx # without this line, the rule is not written into the controller
  rules:
  - host: qwq.quwenqing.com
    http:
      paths:
      - backend:
          service:
            name: hostnames-server
            port:
              number: 80
        path: /v1
        pathType: Prefix
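The ingressClassName: nginx value in the manifest above refers to an IngressClass object, which ties the class name to a specific controller. The upstream ingress-nginx manifest is expected to create one along these lines (labels omitted here); treat this as a sketch and check the actual object with kubectl get ingressclass.

```yaml
# Sketch of the IngressClass that spec.ingressClassName: nginx points to.
apiVersion: networking.k8s.io/v1
kind: IngressClass
metadata:
  name: nginx                        # referenced by spec.ingressClassName
spec:
  controller: k8s.io/ingress-nginx   # matches --controller-class in the controller args
```

An Ingress that names no class, or a class no controller claims, is typically ignored by the controller, which would explain why the rule is not picked up when the line is missing.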

# kubectl apply -f ingress-hostname.yaml
# kubectl get ingress
NAME CLASS HOSTS ADDRESS PORTS AGE
ingress-hostname nginx qwq.quwenqing.com 80 7m

(2) View the details of ingress-hostname

# kubectl describe ingress ingress-hostname
Name: ingress-hostname
Labels:
Namespace: default
Address:
Ingress Class: nginx
Default backend:
Rules:
  Host Path Backends
  ---- ---- --------
  qwq.quwenqing.com
    /v1 hostnames-server:80 (192.168.141.219:9376,192.168.141.226:9376,192.168.244.186:9376)
Annotations:
Events:
  Type Reason Age From Message
  ---- ------ ---- ---- -------
  Normal Sync 45s (x2 over 123m) nginx-ingress-controller Scheduled for sync
  Normal Sync 45s (x2 over 123m) nginx-ingress-controller Scheduled for sync

# kubectl get pods -n ingress-nginx
NAME READY STATUS RESTARTS AGE
ingress-nginx-controller-2vq76 1/1 Running 0 124m
ingress-nginx-controller-n5kq8 1/1 Running 0 123m

(3) Enter the nginx-ingress-controller Pod and check whether the nginx configuration has been injected

# kubectl exec -n ingress-nginx -it ingress-nginx-controller-2vq76 -- /bin/bash
bash-5.1$ cat /etc/nginx/nginx.conf | grep quwenqing
## start server qwq.quwenqing.com
server_name qwq.quwenqing.com ;
## end server qwq.quwenqing.com

bash-5.1$ cat /etc/nginx/nginx.conf
......
## start server qwq.quwenqing.com
server {
    server_name qwq.quwenqing.com ;
    listen 80 ;
    listen 443 ssl http2 ;
    set $proxy_upstream_name "-";
    ssl_certificate_by_lua_block {
        certificate.call()
    }
    location /v1/ {
        set $namespace "default";
        set $ingress_name "ingress-hostname";
        set $service_name "hostnames-server";
        set $service_port "80";
        set $location_path "/v1";
        set $global_rate_limit_exceeding n;
......

(4) Access the service

Via the host network:

curl -i -v -H 'Host: qwq.quwenqing.com' http://node1_ip/v1

Over a NodePort Service:

curl -i -v -H 'Host: qwq.quwenqing.com' http://node1_ip:31080/v1

Adding a tomcat service

(1) Write the tomcat manifest file

# vim tomcat-demo.yaml
apiVersion: v1
kind: Service
metadata:
  name: tomcat
  namespace: default
spec:
  selector:
    app: tomcat
    release: canary
  ports:
  - name: http
    targetPort: 8080
    port: 8080
  - name: ajp
    targetPort: 8009
    port: 8009
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: tomcat-deploy
  namespace: default
spec:
  replicas: 3
  selector:
    matchLabels:
      app: tomcat
      release: canary
  template:
    metadata:
      labels:
        app: tomcat
        release: canary
    spec:
      containers:
      - name: tomcat
        image: tomcat:8.5.34-jre8-alpine
        # this image is pulled from Docker Hub; check hub.docker.com to see whether the version has changed
        ports:
        - name: http
          containerPort: 8080
        - name: ajp
          containerPort: 8009

# kubectl get pods
NAME READY STATUS RESTARTS AGE
tomcat-deploy-6dd558cd64-b4xbm 1/1 Running 0 3m
tomcat-deploy-6dd558cd64-qtwpx 1/1 Running 0 3m
tomcat-deploy-6dd558cd64-w7f9s 1/1 Running 0 5m

(2) Check inside a tomcat Pod that ports 8080 and 8009 are being listened on, and view the tomcat svc

# kubectl exec tomcat-deploy-6dd558cd64-b4xbm -- netstat -tnl
Active Internet connections (only servers)
Proto Recv-Q Send-Q Local Address Foreign Address State
tcp 0 0 127.0.0.1:8005 0.0.0.0:* LISTEN
tcp 0 0 0.0.0.0:8009 0.0.0.0:* LISTEN
tcp 0 0 0.0.0.0:8080 0.0.0.0:* LISTEN

# kubectl get svc
NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
......
tomcat ClusterIP 10.104.158.148 8080/TCP,8009/TCP 28m

(3) Write the ingress rule for tomcat and create the ingress resource

# vim ingress-tomcat.yaml
apiVersion: extensions/v1beta1 # no longer served as of Kubernetes v1.22; newer clusters require networking.k8s.io/v1
kind: Ingress
metadata:
  name: tomcat
  namespace: default
  annotations:
    kubernetes.io/ingress.class: "nginx"
spec:
  ingressClassName: nginx
  rules:
  - host: tomcat.quwenqing.com # host domain name
    http:
      paths:
      - path:
        backend:
          serviceName: tomcat
          servicePort: 8080

# kubectl apply -f ingress-tomcat.yaml
ingress.extensions/tomcat created

(4) View the ingress details

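# kubectl get ingress
NAME HOSTS ADDRESS PORTS AGE
tomcat tomcat.quwenqing.com 80 5s

(5) Summary

From the deployment process above, the workflow can be summarized as follows:

① Download the YAML files for the Ingress controller and create a dedicated namespace for it;

② Deploy the backend service, such as myapp, and expose it through a Service;

③ Deploy the Ingress and define the rules that associate the Ingress controller with the backend service's group of Pods.

The diagram for this deployment is as follows: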

Building a TLS site

(1) Prepare the certificate

# openssl genrsa -out tls.key 2048
Generating RSA private key, 2048 bit long modulus
.......+++
.......................+++
e is 65537 (0x10001)

# openssl req -new -x509 -key tls.key -out tls.crt -subj /C=CN/ST=Beijing/L=Beijing/O=DevOps/CN=tomcat.quwenqing.com

(2) Create the secret

# kubectl create secret tls tomcat-ingress-secret --cert=tls.crt --key=tls.key

secret/tomcat-ingress-secret created
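For reference, the imperative kubectl create secret tls command above corresponds roughly to the declarative manifest sketched below. The two data values are placeholders, not real content; they stand for the base64-encoded contents of the tls.crt and tls.key files generated in step (1).

```yaml
# Sketch of the Secret that "kubectl create secret tls" produces; data values are placeholders.
apiVersion: v1
kind: Secret
metadata:
  name: tomcat-ingress-secret
  namespace: default
type: kubernetes.io/tls
data:
  tls.crt: <base64-encoded contents of tls.crt>   # placeholder
  tls.key: <base64-encoded contents of tls.key>   # placeholder
```

Keeping the Secret as a manifest can be convenient when the rest of the deployment is applied from files, as long as the private key is not committed to version control in plain form.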

# kubectl get secret
NAME TYPE DATA AGE
default-token-j5pf5 kubernetes.io/service-account-token 3 39d
tomcat-ingress-secret kubernetes.io/tls 2 9s

# kubectl describe secret tomcat-ingress-secret
Name: tomcat-ingress-secret
Namespace: default
Labels:
Annotations:
Type: kubernetes.io/tls
Data
====
tls.crt: 1294 bytes
tls.key: 1679 bytes

(3) Create the ingress

# kubectl explain ingress.spec
# kubectl explain ingress.spec.tls
# vim ingress-tomcat-tls.yaml
apiVersion: extensions/v1beta1 # no longer served as of Kubernetes v1.22; newer clusters require networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-tomcat-tls
  namespace: default
  annotations:
    kubernetes.io/ingress.class: "nginx"
spec:
  ingressClassName: nginx
  tls:
  - hosts:
    - tomcat.quwenqing.com
    secretName: tomcat-ingress-secret
  rules:
  - host: tomcat.quwenqing.com
    http:
      paths:
      - path:
        backend:
          serviceName: tomcat
          servicePort: 8080

# kubectl apply -f ingress-tomcat-tls.yaml
ingress.extensions/ingress-tomcat-tls created

# kubectl get ingress
NAME HOSTS ADDRESS PORTS AGE
ingress-tomcat-tls tomcat.quwenqing.com 80, 443 5s
tomcat tomcat.quwenqing.com 80 1h

# kubectl describe ingress ingress-tomcat-tls

(4) Test access through the NodePort Service, using the HTTPS nodePort 31443 configured above: https://tomcat.quwenqing.com:31443

Reference: https://s2.51cto.com/images/blog/202205/10000428_62793b8c5622d57858.png?x-oss-process=image/watermark,size_16,text_QDUxQ1RP5Y2a5a6i,color_FFFFFF,t_30,g_se,x_10,y_10,shadow_20,type_ZmFuZ3poZW5naGVpdGk=